Introduction: AI Is Entering the Pipeline Quietly, Not Loudly

In 2026, the biggest change in game development is more subtle than the headlines suggest. Instead of replacing entire teams, AI is quietly improving pipelines behind the scenes.

Modern studios must build faster, test thoroughly, understand player behavior early, and optimize post-launch performance. In this environment, AI excels as a supportive layer, enabling better execution across production.

For teams focused on game development services or mobile games, AI is most useful in practical areas like level design, QA automation, analytics, and LiveOps. These parts of the process benefit from faster decision-making and quicker iteration, leading to better quality and stronger long-term results.

This article looks at four main parts of the game development pipeline and explains why studios that use AI thoughtfully in 2026 gain real advantages without getting caught up in the hype.

Why 2026 Feels Different: Studios Want Efficiency, Not AI Theater

AI feels more relevant to game development in 2026, not because of full automation, but because of increased production pressure, higher content expectations, and the need for tighter execution. Studios seek practical pipeline solutions, not replacements.

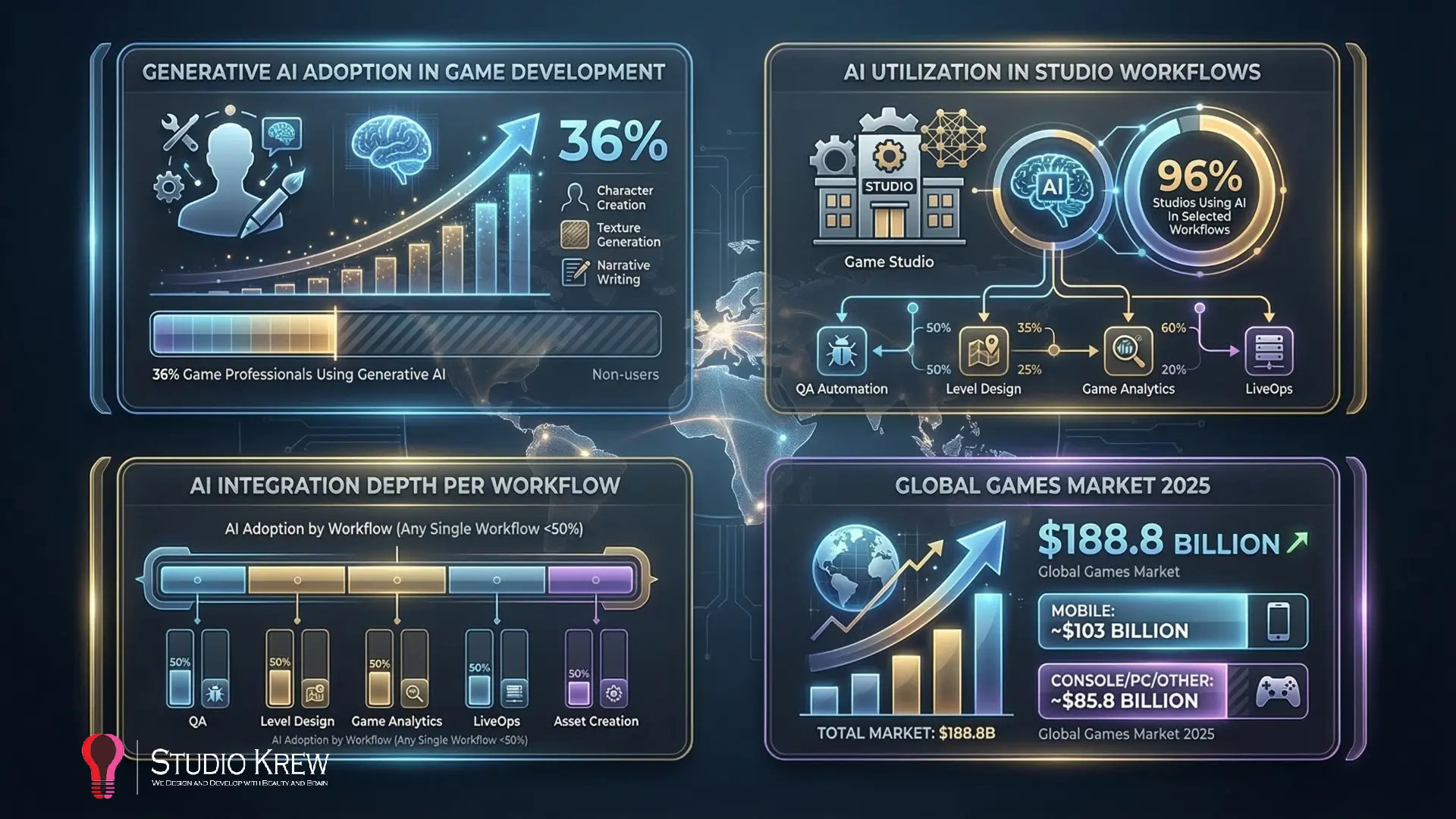

Industry data reflects this shift. GDC’s 2026 State of the Game Industry reports that 36% of professionals use generative AI, while Unity’s 2025 report shows 96% of studios integrating AI tools into selected workflows, though fewer than half use them in any single workflow. Adoption is growing, but it is measured and selective.

This explains why “AI theater” is fading; studios now focus on tools that speed up shipping, surface issues early, or improve live performance. Unity frames this shift as boosting productivity and agility rather than reinventing development.

The business context also matters. Newzoo reported the global games market reaching roughly $188.8 billion in 2025, with mobile still the largest segment at about $103 billion. In a market that large, even small gains in retention, QA efficiency, progression tuning, or LiveOps timing can have a meaningful commercial impact. That is one reason AI is becoming more useful in support functions such as analytics, testing, and optimization, especially for teams building mobile and service-driven products.

At the same time, 2026 is not a story of blind industry enthusiasm. The same GDC 2026 findings also show significant skepticism toward generative AI, which makes this moment more credible, not less. Teams are adopting AI where it helps, questioning it where it does not, and keeping human judgment in the loop where quality, creativity, and player experience still depend on it. That is a healthier foundation for modern full-cycle game development than either fear or hype.

For a serious game studio, that is what makes 2026 different. AI is no longer interesting just because it is new. It is becoming useful because studios are finally judging it by production outcomes.

Where AI Actually Fits in a Modern Game Development Pipeline

One of the biggest mistakes in the AI conversation is treating game development like a single workflow.

It is not.

A modern game is built from multiple connected stages, each with a different goal, team rhythm, and pressure. Some stages are idea-heavy, some system-heavy, others testing-heavy, and some are driven by player behavior after launch. That’s why AI’s value is uneven across the pipeline. In a few areas, it saves time. In others, it enhances visibility and decision-making, thereby impacting production quality.

Studios that see the most benefit from AI in 2026 understand these differences. Rather than applying AI everywhere, they identify where it removes friction without disrupting the craft.

Examining Pre-Production: Helpful for Exploration, Weak at Defining What Makes a Game Work

Pre-production is where a game begins to take shape. Teams explore the concept, target audience, core loop, references, progression direction, visual language, and product positioning. This stage looks exciting from the outside, but it is also where many weak ideas are mistakenly polished before they are properly challenged.

AI helps here by clustering references, comparing designs, summarizing competitors, and organizing concepts faster. This speeds up discovery but does not solve pre-production’s hardest problem: deciding whether the game is worth building.

A strong concept still depends on human judgment. Teams must ask: Is the loop engaging? Is the audience clear? Is progression promising? Is there market space? AI supports information processing but cannot replace product instinct.

That is why pre-production is a useful AI support zone, but rarely the stage where AI delivers the most significant operational benefit. Key takeaway: AI can enhance research and organization here, but not replace core human judgment.

Production: This Is Where Workflow Efficiency Starts to Matter

Once production begins, the conversation changes.

Now the team is no longer asking only what the game should be. They are asking how quickly they can turn decisions into playable, testable, improvable content. This is where AI starts becoming much more relevant, especially in workflows that involve repeated iteration.

During production, teams build levels, adjust systems, tune progression, review content, fix pacing, and coordinate across design, engineering, art, and production. These tasks are not glamorous, but they are real bottlenecks. Small, repeated inefficiencies can slow a project more than a single major obstacle.

This is where AI starts proving its value. Not by making the game on its own, but by helping teams move through repeated comparisons, repeated pattern checks, repeated balancing decisions, and repeated workflow loops with more speed and context.

The gain is not magic. The gain is momentum. Key takeaway: AI’s true value lies in enabling teams to move faster on repetitive, high-volume tasks, effectively accelerating progress.

QA and Validation: One of the Clearest AI Wins in the Entire Pipeline

If there is one area where AI feels immediately practical, it is QA.

Game testing is repetitive. Teams validate systems across builds, retest fixes, compare interfaces, check logs, reproduce crashes, and run content paths repeatedly. In mobile, online, and cross-platform games, this repetition increases as more devices, updates, and gameplay combinations are introduced.

This makes QA a natural fit for intelligent automation support.

AI can help identify unusual patterns inside logs, group similar crash behavior, support screenshot comparisons, and highlight where repeated validation is likely to uncover issues first. It can also help reduce the noise that often slows down testing teams, especially late in production or during live operations.

That does not make human QA less important. In fact, it makes human QA more valuable where judgment matters most. A machine can help flag anomalies. It cannot fully judge whether a mechanic feels fair, whether progression feels frustrating, or whether a player-facing bug is minor or experience-breaking in the real context of play.

So in QA, AI works best as a force multiplier. It handles more of the repetitive scanning, so human testers can spend more time on meaningful evaluation.

Launch Preparation: AI Helps Reduce Last-Minute Friction, Not Eliminate It

As launch approaches, the entire pipeline becomes more sensitive.

At this point, teams are managing stability, performance, compliance requirements, bug prioritization, storefront readiness, build validation, and cross-device reliability. The closer a product gets to launch, the more expensive late surprises become. A single overlooked issue can create a poor first impression, delay release readiness, or damage player trust early.

AI is not central here, but it can help with triage, surface patterns, assist final validation, and show which problems matter most based on test data.

That matters even more when the game is being prepared for multiple release environments. A cross-platform game development pipeline is already more complex than a single-platform production cycle, and that complexity increases again when compliance, performance, and polish must be maintained across multiple versions of the same product.

In other words, AI does not remove launch pressure. It helps teams navigate it with better visibility.

Post-Launch: This Is Where AI Becomes Operationally Powerful

Many teams still think of AI mainly as a pre-production or content-generation topic. In reality, some of its strongest value appears after the game goes live.

That is because a live game produces something development teams never have enough of before launch: real player data.

Once players enter the ecosystem, the game starts generating telemetry around onboarding, progression, churn, retention, monetization behavior, content engagement, session patterns, event performance, and feature drop-off. This creates a much richer signal environment than internal testing alone can provide. The challenge is no longer collecting data. The challenge is interpreting it quickly enough to make better decisions before the next update cycle.

This is where AI becomes genuinely useful for modern studios. It can help teams identify friction points earlier, spot unusual cohort behavior, surface retention risks, and interpret performance signals that would otherwise remain buried in dashboards. That is one reason AI is becoming increasingly relevant to LiveOps, analytics, and ongoing optimization for games that are expected to evolve after launch.
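As a small illustration of what "surfacing retention risks" starts from, the sketch below computes day-1 retention per install cohort. The player IDs, dates, and the `installs` and `activity` structures are hypothetical; a real pipeline would read them from the studio's own telemetry store.

```python
from datetime import date, timedelta

# Hypothetical telemetry: install date per player and the dates each player was active.
installs = {
    "p1": date(2026, 1, 1),
    "p2": date(2026, 1, 1),
    "p3": date(2026, 1, 2),
    "p4": date(2026, 1, 2),
}
activity = {
    "p1": {date(2026, 1, 1), date(2026, 1, 2)},
    "p2": {date(2026, 1, 1)},
    "p3": {date(2026, 1, 2), date(2026, 1, 3)},
    "p4": {date(2026, 1, 2), date(2026, 1, 3)},
}

def day_n_retention(installs, activity, n=1):
    """Return {install_date: share of that cohort active exactly n days after install}."""
    cohorts = {}
    for player, installed in installs.items():
        returned = (installed + timedelta(days=n)) in activity.get(player, set())
        size, kept = cohorts.get(installed, (0, 0))
        cohorts[installed] = (size + 1, kept + int(returned))
    return {day: kept / size for day, (size, kept) in cohorts.items()}

retention = day_n_retention(installs, activity, n=1)
```

A cohort whose value falls well below its neighbours is the kind of signal worth a closer human look. The number alone does not explain the cause.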

This is especially important for an online game development company, where launch is not the finish line. It is the point where operational intelligence becomes part of the product itself.

The Real Opportunity Is Not “AI Everywhere,” It Is “AI in the Right Places”

The most effective studios are not asking whether AI should be used everywhere in the pipeline.

They are asking where it creates enough value to justify trust, integration, and team adoption.

In 2026, the clearest answers are usually not in replacing creative leadership. They are in helping teams where the workflow is already under pressure, where repetition is high, where data is dense, or where post-launch decisions need to happen faster.

That is why this article focuses on four areas where AI is quietly reshaping pipeline performance in a meaningful way:

The Four Areas This Blog Will Focus On

Level design support

AI can help teams test variations, review pacing, and explore progression patterns more quickly, while maintaining designer control.

QA automation

AI can support repetitive validation, anomaly detection, crash grouping, and test prioritization, especially in build-heavy environments.

Analytics insights

AI can help translate player telemetry into more actionable product decisions, instead of leaving teams buried in dashboards.

LiveOps optimization

AI can support smarter event timing, player segmentation, update analysis, and retention-focused post-launch decisions.

These four areas matter because they sit close to real production outcomes. They affect how fast a team can iterate, how well a game can be tested, how clearly player behavior can be understood, and how effectively a live product can improve over time.

That is the real shape of AI in modern game development. Not a dramatic takeover, but a quieter operational layer that helps studios design better, test faster, learn sooner, and optimize with more confidence.

AI in Level Design: Faster Iteration Without Losing Designer Control

Level design is one of the clearest examples of where AI can be useful without becoming the creative owner of the work.

That distinction matters. Good level design is not just about placing obstacles, enemies, rewards, or routes inside a playable space. It is about shaping tension, teaching mechanics, controlling pacing, guiding attention, and making players feel challenged without feeling confused. Those decisions are rarely mechanical. They depend on player psychology, product intent, and the kind of experience the team wants the game to deliver.

This is exactly why AI works best in level design as a support layer, not as a replacement for designers.

The Real Problem in Level Design Is Not Ideas, It Is Iteration

Most game teams do not struggle because they have zero ideas for levels. They struggle because turning a promising idea into a well-balanced, enjoyable, production-ready level takes repeated iteration.

A level may look correct on paper and still fail in play. It may introduce a mechanic too early, create a pacing drop in the middle, overwhelm the player with too many signals, or become predictable faster than expected. In mobile games, the challenge becomes even sharper because levels often need to deliver clarity, reward, and momentum within much shorter session windows.

This is where AI starts becoming useful.

Instead of treating each level revision as a slow manual cycle, teams can use AI-assisted workflows to review patterns faster, compare variations more efficiently, and identify sections that may need more attention before the next round of playtesting. The benefit is not that AI “designs the level better” on its own. The benefit is that it helps the design team reach stronger decisions with less wasted movement.

AI Helps Teams Explore More Layout Variations Without Slowing Down the Pipeline

One hidden cost in level design is that teams often settle too early.

Not because the first layout is best, but because exploring five or ten more versions takes time, communication, testing effort, and production bandwidth. As a result, some promising alternatives never get thoroughly explored. AI can help here by enabling faster analysis of variation in structure, route flow, encounter spacing, hazard placement, progression rhythm, or challenge sequencing.

That gives designers a wider range of options without bloating the pipeline.

For example, a team designing a puzzle sequence, combat room, endless-runner segment, or mission flow may want to test multiple versions of difficulty ramping, reward timing, or obstacle density. AI can help compare those variations in a more structured way, making it easier to understand where friction is increasing too sharply or where a section may be under-challenging.

In practice, this means the designer retains control over intent, while AI helps expand the exploration window.

Pacing Is Where AI Can Quietly Add a Lot of Value

A good level is rarely memorable because it is simply harder or longer. It is memorable because it moves well.

Pacing is one of the most delicate parts of level design. It controls how quickly the player learns, how often they feel rewarded, when pressure rises and eases, and how the overall rhythm of a stage supports retention rather than fatigue. Poor pacing creates levels that feel flat, unfair, repetitive, or exhausting. Strong pacing makes even simple mechanics feel more satisfying.

AI can be useful here because pacing problems often show up as patterns.

A section where too many players slow down, fail repeatedly, abandon the level, or lose interest may not always be obvious from intuition alone. AI-assisted analysis can help surface those patterns earlier, especially when teams are reviewing internal test sessions or live player behavior. That makes it easier to spot difficulty spikes, dead zones, overly empty stretches, or level segments where the design intention isn’t landing as the team expected.
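One simple way to make such a pattern visible is to measure, for each level segment, how many of the players who reached it quit there. This is a minimal sketch using made-up playtest records; the record layout, the segment numbering, and the 0.3 threshold are all assumptions to adapt per game.

```python
from collections import Counter

# Hypothetical playtest records: (session_id, last_segment_reached, completed_level)
sessions = [
    ("s1", 3, True),
    ("s2", 1, False),
    ("s3", 2, False),
    ("s4", 2, False),
    ("s5", 3, True),
    ("s6", 2, False),
    ("s7", 0, False),
    ("s8", 3, True),
]

def abandonment_by_segment(sessions, segment_count):
    """For each segment, return the share of players who reached it and quit there."""
    quits = Counter(last for _, last, done in sessions if not done)
    rates = []
    for seg in range(segment_count):
        reached = sum(1 for _, last, _ in sessions if last >= seg)
        rates.append(quits[seg] / reached if reached else 0.0)
    return rates

def flag_segments(rates, threshold=0.3):
    """Return the indices of segments whose abandonment rate meets the threshold."""
    return [seg for seg, rate in enumerate(rates) if rate >= threshold]

rates = abandonment_by_segment(sessions, segment_count=4)
flagged = flag_segments(rates)  # segment 2 loses half of the players who reach it
```

The flag only says where to look. Whether segment 2 is an unfair spike or an intended mastery check remains a design call.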

This becomes particularly valuable for a mobile game development company, where session flow is tightly tied to retention. If a level feels too slow, too unclear, or too punishing at the wrong moment, the player may not come back for the next session.

AI Can Support Progression Balancing, but Designers Still Define the Experience

Level design is not only about one stage at a time. It is also about the relationship between stages.

A progression system starts to fail when levels feel too similar, when challenge ramps too aggressively, when mechanics are introduced without enough reinforcement, or when players stop feeling forward momentum. These are design problems, but they are also pattern problems. That is why AI can support balancing by helping teams review larger progression structures more clearly.

It can help answer questions like:

- Where does difficulty rise too sharply?

- Which levels are likely to create repeated player drop-off?

- Where does mechanical repetition begin to feel stale?

- At what point does the reward rhythm weaken?

- Which content segments are underperforming compared to the rest of the progression track?

These are valuable signals, but they are still only signals. The actual design response has to come from humans. A designer may intentionally keep a level harder to create mastery. A producer may preserve a slower level because it gives emotional contrast. A product team may choose to rebalance later because the current friction supports monetization or long-term engagement goals.

So AI can support progression balancing, but it cannot define the emotional logic behind progression. That still belongs to the team.
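The first question in the list above, where difficulty rises too sharply, can be approximated with a very plain check before any model is involved. The sketch below assumes a hypothetical list of per-level fail rates and an arbitrary `max_jump` tolerance.

```python
# Hypothetical fail rates per level, in progression order (failed attempts / total attempts).
fail_rates = [0.10, 0.12, 0.15, 0.38, 0.36, 0.40, 0.41]

def find_difficulty_spikes(fail_rates, max_jump=0.15):
    """Return (level_number, jump) for levels whose fail rate rises by more
    than max_jump compared with the previous level. Levels are numbered from 1."""
    spikes = []
    for i in range(1, len(fail_rates)):
        jump = fail_rates[i] - fail_rates[i - 1]
        if jump > max_jump:
            spikes.append((i + 1, round(jump, 2)))
    return spikes

spikes = find_difficulty_spikes(fail_rates)  # level 4 jumps by about 0.23
```

A flagged level is a prompt for review, not a verdict. As noted above, a designer may keep the spike on purpose.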

AI Is Especially Useful in System-Heavy and Content-Heavy Games

The larger the ecosystem becomes, the more valuable intelligent support becomes.

In games with many levels, recurring systems, procedural variations, live content drops, or event-based missions, the challenge is no longer just designing one strong level. The challenge is maintaining quality across a growing content base without letting inconsistency creep in. That is where AI becomes more operationally useful.

A studio working on puzzle progression, action-stage sequencing, multiplayer maps, endless-content structures, or seasonal event content may need to review far more variables than one designer can comfortably track instinctively. AI can help teams spot recurring patterns, compare performance across content types, and identify when the structure becomes too safe, too noisy, or too uneven.

This is particularly relevant for teams building large-scale content workflows in engines such as Unity and Unreal, where rapid iteration is a major strength. For a Unity game development company or an Unreal Engine game development company, that speed is a major advantage, but it also increases the need for stronger review systems around pacing, variation, and progression quality.

In other words, the more scalable the production pipeline becomes, the more valuable AI becomes as a support layer for maintaining that scale.

What AI Still Cannot Do Well in Level Design

This is the part many AI-focused articles skip, but it is important.

AI can support iteration. It can support comparison. It can support balancing reviews. But it still cannot fully own the deeper creative decisions that make level design feel intentional.

It cannot reliably decide what makes a level emotionally satisfying. It cannot fully understand when friction feels meaningful versus frustrating. It cannot naturally judge whether a section feels memorable, clever, oppressive, playful, surprising, or boring, as an experienced designer can. It can detect patterns, but it cannot fully replace an authored experience.

That means teams should be careful not to confuse volume with quality.

A pipeline that generates more level options is only useful if the team still knows how to choose the right ones. Otherwise, AI just creates more material to review, not a better player experience.

The Best Use of AI in Level Design Is to Strengthen Designer Bandwidth

The smartest studios are not using AI in level design to remove designers from the process. They are using it to protect designer bandwidth.

That is a much more valuable goal.

Designers should spend less time manually sorting through repetitive variations and more time making high-value decisions about challenge, clarity, reward, emotion, progression, and player learning. AI can help create that shift when it is used carefully. It can reduce the drag around iteration, surface useful signals earlier, and help teams move into stronger playtesting rounds with better-informed assumptions.

For studios delivering broader game development services or supporting connected online products, this can become a real operational advantage. Better level iteration does not just improve design quality. It can also shorten wasteful cycles across production, QA, analytics, and post-launch tuning.

That is the quiet value of AI in level design.

Not automated creativity, but accelerated refinement.

AI in QA Automation: Catching More Problems Before Players Do

QA is one of the clearest areas where AI can create practical value in a modern game pipeline.

That is because testing is inherently repetitive. Teams recheck the same flows across new builds, validate fixes after each update, compare interface states across devices, investigate logs, and make sure one small change has not broken something somewhere else. As games become more content-heavy, update-driven, and service-oriented, that workload grows quickly. This is where AI becomes useful, not as a replacement for QA, but as a way to make testing workflows more intelligent and more scalable.

Why Manual-Only QA Is No Longer Enough

Manual QA is still essential, but manual-only QA struggles to keep pace with how modern games are built and maintained.

A game today may need to function across multiple devices, operating systems, screen sizes, patch versions, monetization states, event layers, and backend conditions. Once the game is live, the testing burden expands even further. Every content update, balance change, store revision, ad integration, or LiveOps event creates new opportunities for regressions and unexpected behavior.

That means the challenge is no longer simply to find bugs. It is to find the right bugs early, understand which issues matter most, and do so at a pace that matches the release cycle. For many teams, especially those working in fast-moving pipelines, manual-only QA starts becoming too slow, too repetitive, and too difficult to scale efficiently.

What AI-Assisted QA Can Actually Help With

This is where AI-supported QA starts becoming genuinely useful.

One of its strongest roles is in validating repetitive gameplay paths. Critical flows such as tutorials, progression checks, reward claims, store behavior, and event participation often need to be tested repeatedly across builds. AI-assisted workflows can help identify deviations, surface irregular outcomes, and support more reliable repeated validation.

AI is also helpful in crash grouping and log analysis. Instead of forcing teams to manually read large volumes of raw issue data, it can help cluster similar crashes, highlight recurring anomalies, and make triage more efficient. In the same way, it can support visual and interface comparison, helping teams catch unusual differences across screens, device states, or repeated UI flows that may otherwise be missed under testing pressure.
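Crash grouping often begins with something simpler than machine learning: normalizing stack traces so that reports differing only in addresses or line numbers share one signature. The sketch below shows that baseline. The trace format and frame names are invented for illustration, and a production tool would likely layer fuzzier similarity on top.

```python
import re
from collections import defaultdict

def crash_signature(stack_trace, top_frames=3):
    """Build a grouping key from the top frames, ignoring addresses and line numbers."""
    frames = []
    for line in stack_trace.strip().splitlines()[:top_frames]:
        line = re.sub(r"0x[0-9a-fA-F]+", "<addr>", line)  # memory addresses vary per run
        line = re.sub(r":\d+", ":<line>", line)            # line numbers shift between builds
        frames.append(line.strip())
    return " | ".join(frames)

def group_crashes(reports):
    """Group report IDs by signature, largest group first."""
    groups = defaultdict(list)
    for report_id, trace in reports:
        groups[crash_signature(trace)].append(report_id)
    return sorted(groups.values(), key=len, reverse=True)

# Hypothetical crash reports: (report_id, stack_trace)
reports = [
    ("c1", "InventoryUI.Refresh (InventoryUI.cs:88)\nRewardPopup.Show (RewardPopup.cs:41)\nat 0x7ffa12"),
    ("c2", "InventoryUI.Refresh (InventoryUI.cs:91)\nRewardPopup.Show (RewardPopup.cs:41)\nat 0x7ffb90"),
    ("c3", "StoreClient.Fetch (StoreClient.cs:17)\nStoreScreen.Open (StoreScreen.cs:52)\nat 0x11aa00"),
]
groups = group_crashes(reports)
```

Here `c1` and `c2` fall into the same group, so a tester reads one cluster instead of two near-identical reports.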

Another important contribution is test prioritization. QA teams do not need equal scrutiny on every output at every moment. AI can help highlight likely regression risks, unstable states, or repeated failure patterns, so testers can focus more energy where human review matters most.
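A rough sketch of the idea: score each test area from a few signals and review the highest scores first. The `QaArea` fields, the weights, and the sample areas are assumptions chosen for illustration, not a recommended formula.

```python
from dataclasses import dataclass

@dataclass
class QaArea:
    name: str
    recent_failures: int   # failures seen in recent builds
    files_changed: int     # changed files touching this area in the current build
    player_facing: bool    # whether a break is directly visible to players

def risk_score(area, w_fail=3.0, w_change=1.0, player_facing_bonus=5.0):
    """Weighted sum of failure history and code churn, plus a bonus for player-facing flows."""
    score = w_fail * area.recent_failures + w_change * area.files_changed
    if area.player_facing:
        score += player_facing_bonus
    return score

def prioritize(areas):
    """Return areas ordered from highest to lowest risk score."""
    return sorted(areas, key=risk_score, reverse=True)

# Hypothetical areas for one build
areas = [
    QaArea("tutorial", recent_failures=0, files_changed=1, player_facing=True),
    QaArea("store", recent_failures=2, files_changed=4, player_facing=True),
    QaArea("analytics_events", recent_failures=1, files_changed=6, player_facing=False),
]
ordered = [area.name for area in prioritize(areas)]
```

With these inputs the store flow comes first, then analytics events, then the tutorial. A learned model could replace the fixed weights, but the output plays the same role: a suggested order for human testers.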

In a strong pipeline, this does not make QA fully automated. It makes QA more selective, more informed, and better equipped to keep up with production speed.

What Still Needs Human QA

AI can support validation, but it cannot replace judgment.

It cannot reliably decide whether a mechanic feels fair, whether onboarding feels frustrating, whether a progression block is meaningful or simply annoying, or whether a visual issue is minor on paper but damaging to player trust in practice. It also cannot fully replace the instinct experienced testers bring when they explore edge cases, challenge systems in unintended ways, or notice when something technically works but still feels wrong in actual play.

That is why human QA remains central to quality.

The strongest use of AI in testing is not to remove testers from the loop. It is to reduce the repetitive scanning and sorting that drains their time, so they can focus more on feel, fairness, player experience, exploit risks, and the kinds of issues that require human interpretation.

AI Helps QA Teams Move From Bug Volume to Bug Priority

One of the biggest challenges in QA is not finding issues. It is deciding which issues need attention first.

A modern game build can produce a large number of signals at once, from crash reports and UI inconsistencies to progression breaks, event-state problems, and backend-linked errors. If every issue is treated with the same urgency, teams can lose time on noise while more damaging problems remain unresolved. This is where AI becomes especially useful as a prioritization layer.

By helping group similar failures, highlight repeated instability, and surface patterns around critical player-facing flows, AI can support faster triage. That allows QA leads, producers, and developers to focus first on the issues most likely to affect player trust, retention, monetization, or release readiness. In practice, this makes the QA process not just faster, but more aligned with product impact.

Why This Matters Most for Live Mobile Titles

This matters especially in live mobile games, where testing pressure is constant, and the room for error is small.

A mobile game development company often has to deal with device fragmentation, frequent updates, changes in monetization, event builds, UI sensitivity, and player journeys critical to retention. A bug in a reward flow, store state, event screen, or onboarding sequence can affect not only quality but also player trust, return sessions, and commercial performance.

That pressure increases even further in connected, update-driven environments, which is why these QA advantages also matter across broader game development services and multiplayer and online products. But mobile titles feel this pressure earliest and most consistently because their release cadence, user expectations, and content cycles leave less room for repeated manual-only testing.

That is the real value of AI in QA automation.

Not fewer testers, but smarter quality control that helps teams catch more problems before players do.

AI in Game Analytics: Turning Telemetry Into Better Product Decisions

Game teams rarely struggle because they lack data. More often, they struggle because they have too much of it and not enough clarity around what to do next.

A live game generates a constant stream of information, from session length and progression behavior to churn signals, feature usage, event participation, and monetization response. All of that data should make decision-making easier. But in many pipelines, it does the opposite. Teams end up surrounded by dashboards while still debating which issue matters most, which signals are noise, and which patterns actually deserve action.

This is where AI starts becoming genuinely useful in game analytics. Its value is not in producing more reports. Its value is in helping teams get from telemetry to product decisions faster.

From Dashboards to Decisions

Traditional analytics setups are good at showing what happened. They are often much weaker at helping teams understand why it happened and what should happen next.

A dashboard can show that retention dropped, that a feature underperformed, or that players are leaving earlier than expected. But product teams still have to interpret those patterns, connect them to player behavior, and decide whether the problem is tied to onboarding, pacing, progression, reward timing, or something else entirely. That takes time, and when teams are under pressure, time is exactly what they do not have much of.

AI helps reduce that gap. Instead of only presenting numbers, it can help organize patterns, highlight likely causes, and make it easier to identify where a team should focus first. That turns analytics into something more useful than passive reporting. It becomes an active decision-support layer inside the game development pipeline.

What AI Can Surface Faster

The biggest advantage AI brings to analytics is speed in pattern recognition.

It can help teams identify churn-risk behavior, unexpected funnel drop-offs, low-performing features, progression friction, reward imbalance, and unusual cohort movement much earlier than a manual review cycle often would. It can also reveal when a problem that looks simple on the surface is actually connected to something deeper.

For example, weak conversion may not always be a monetization issue. It may be caused by players failing to reach the right progression moment. A tutorial drop-off may not be only a UX problem; it may be tied to overload, unclear goals, or poor early pacing. A live event may seem average overall while performing strongly in one segment and poorly in another.

These are the kinds of connections that matter in real production. AI does not replace human interpretation, but it can surface the signals faster, so teams are not reacting too late.
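The live-event case is easy to show with numbers. In this sketch, built on invented rows and segment names, overall participation looks average while the per-segment view tells two different stories.

```python
from collections import defaultdict

# Hypothetical rows: (player_segment, joined_event)
rows = [
    ("new", True), ("new", False), ("new", False), ("new", False),
    ("veteran", True), ("veteran", True), ("veteran", True), ("veteran", False),
]

def participation_by_segment(rows):
    """Return {segment: share of players in that segment who joined the event}."""
    totals = defaultdict(int)
    joined = defaultdict(int)
    for segment, did_join in rows:
        totals[segment] += 1
        joined[segment] += int(did_join)
    return {segment: joined[segment] / totals[segment] for segment in totals}

overall = sum(did_join for _, did_join in rows) / len(rows)
by_segment = participation_by_segment(rows)
```

Overall participation is 50%, but new players join at 25% and veterans at 75%. The aggregate hides exactly the difference a LiveOps team would want to act on.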

Practical Use Cases

This is where analytics becomes operationally valuable.

AI-supported analytics can help teams redesign tutorials when early-session behavior shows confusion or weak momentum. It can support progression tuning by identifying where players repeatedly stall, disengage, or lose motivation. It can improve event planning by showing which content formats actually drive repeat sessions rather than just creating short-lived spikes. It can also make monetization decisions smarter by connecting purchase behavior to progression state, feature engagement, and reward timing rather than looking at revenue events in isolation.

These use cases matter because they are tied directly to the product, not just the reporting layer. They influence how a game is refined after launch and how future updates are prioritized.

For a mobile game development company, this is especially important because small moments of friction can have an outsized impact on retention. For teams building more connected products through game development services, better analytics does not just improve visibility. It improves the quality of decisions being made across design, product, and LiveOps.

Why This Matters for Studios Selling Outcomes

Studios are not hired simply to ship features. They are increasingly expected to help games perform better over time.

That means analytics can no longer sit quietly in the background as a reporting function. It has to contribute to measurable outcomes, whether that means stronger retention, healthier monetization, better event response, smarter progression, or a clearer roadmap for future updates. AI becomes valuable here because it helps teams move from raw telemetry to faster product intelligence, thereby improving their response time.

This matters even more in dynamic environments such as multiplayer and live online products, where player behavior shifts faster, and the cost of slow interpretation is higher. A studio that can read signals sooner and act with more confidence is in a much stronger position than one that is still trying to manually decode every dashboard trend after momentum has already slipped.

That is the real value of AI in game analytics.

Not more numbers, but clearer signals that help studios make better product decisions and deliver better outcomes over time.

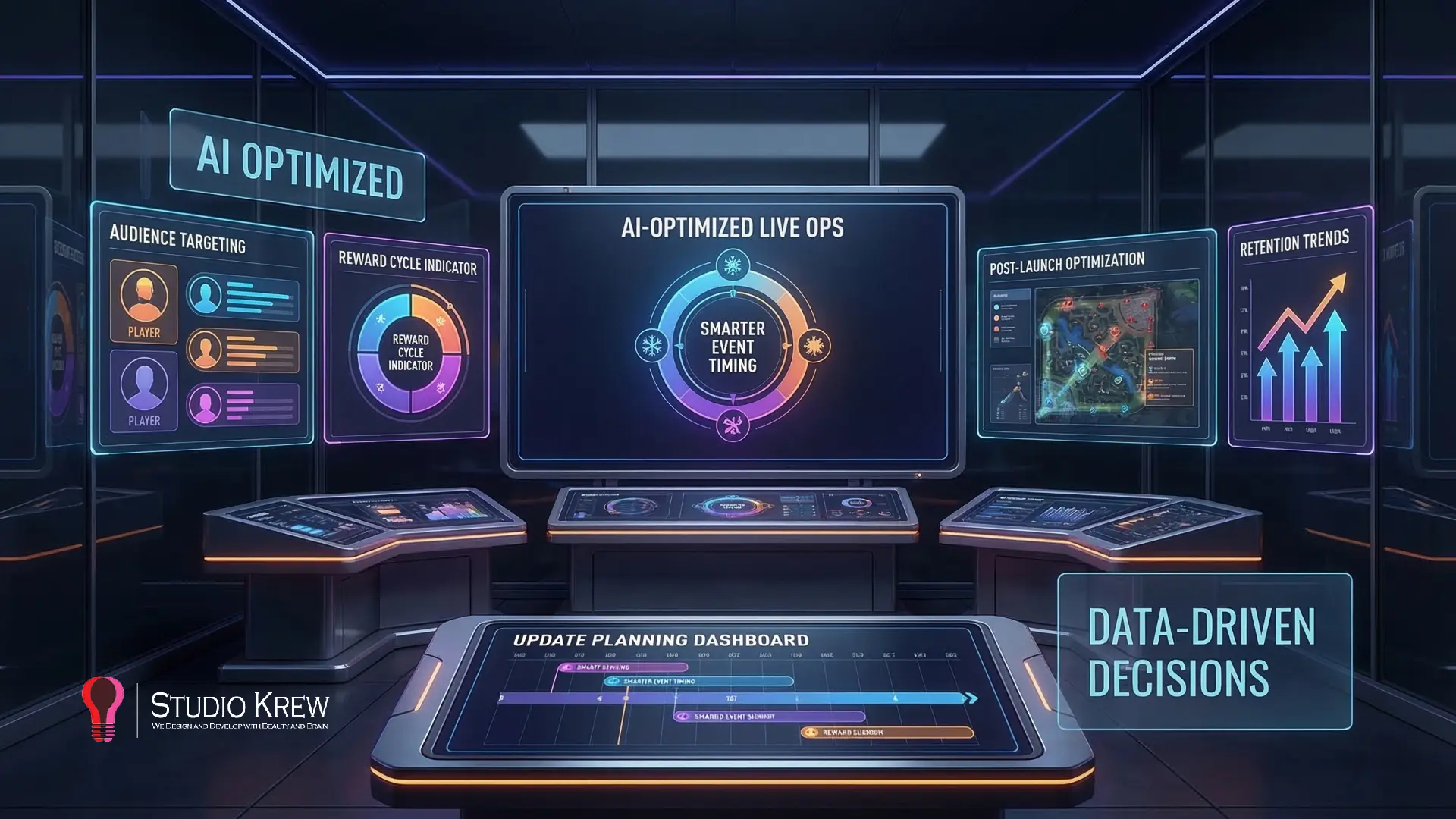

AI in LiveOps Optimization: Better Events, Better Timing, Smarter Retention

LiveOps has become one of the most important layers in modern game development, especially for mobile, online, and service-driven titles. A game is no longer judged only by how polished it feels at launch. It is also judged by how well it stays relevant after launch through events, content refreshes, progression updates, offer timing, and player re-engagement.

This is one of the reasons AI is becoming more useful in game production pipelines. Once a game is live, teams no longer work solely on assumptions. They are working with real player behavior, real event data, and real retention signals. That creates an environment where AI can support better timing, stronger segmentation, and more informed LiveOps decision-making without taking strategy away from the team.

Why LiveOps Is Now Central to Game Growth

LiveOps is no longer a post-launch add-on. For many successful games, it is part of the product’s long-term growth engine.

A well-planned LiveOps strategy helps maintain player interest, improve return sessions, support monetization, and extend the life of content that would otherwise lose momentum too quickly. Seasonal events, progression resets, challenge systems, reward loops, and time-limited content all help keep the game feeling active. But their real value depends on how well they are timed and how well they match player expectations.

This matters even more in mobile and online environments, where player attention is fragmented and content fatigue can set in quickly. A strong live game does not simply add more events. It creates the right reasons for players to come back.

That is why LiveOps now sits much closer to retention and revenue than many teams expected a few years ago.

Where AI Helps LiveOps Teams

AI is most useful in LiveOps when it helps teams make better operational decisions from behavior patterns that would otherwise take longer to spot.

One clear use case is event timing recommendations. Not every event performs poorly because the content is weak. Sometimes the issue is timing. Players may not yet be invested enough, may already be experiencing fatigue, or may be at the wrong stage of progression. AI can help teams read those patterns more clearly and identify when engagement conditions are stronger.

Another useful area is player segmentation. LiveOps becomes much more effective when players are not treated as one large group. Some are highly engaged, some are at risk of leaving, some respond well to competitive loops, and some engage more through reward-driven progression. AI can help surface these patterns and make LiveOps planning more targeted.
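As a rough illustration of the segmentation idea above, here is a minimal rule-based sketch in Python. The field names (`sessions_last_7d`, `sessions_prev_7d`, `pvp_share`), the segment labels, and every threshold are assumptions made for this example, not values from any real game or tool. A production setup would more likely learn segments from telemetry than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class PlayerStats:
    # All fields are hypothetical telemetry aggregates chosen for illustration.
    player_id: str
    sessions_last_7d: int   # sessions in the most recent 7 days
    sessions_prev_7d: int   # sessions in the 7 days before that
    pvp_share: float        # fraction of play time in competitive modes (0..1)


def segment(p: PlayerStats) -> str:
    """Assign one coarse LiveOps segment using hand-picked, illustrative thresholds."""
    # A previously active player whose weekly sessions at least halved
    # is treated here as a churn-risk signal.
    if p.sessions_prev_7d >= 4 and p.sessions_last_7d <= p.sessions_prev_7d // 2:
        return "at_risk"
    # Highly engaged players are split by what they spend their time on.
    if p.sessions_last_7d >= 10:
        return "competitive_core" if p.pvp_share >= 0.5 else "reward_driven_core"
    return "casual"


players = [
    PlayerStats("a", sessions_last_7d=2, sessions_prev_7d=9, pvp_share=0.1),
    PlayerStats("b", sessions_last_7d=14, sessions_prev_7d=12, pvp_share=0.7),
    PlayerStats("c", sessions_last_7d=11, sessions_prev_7d=10, pvp_share=0.2),
    PlayerStats("d", sessions_last_7d=3, sessions_prev_7d=3, pvp_share=0.0),
]
segments = {p.player_id: segment(p) for p in players}
print(segments)
```

Even a crude split like this makes the planning question concrete: an at-risk player and a competitive core player probably should not receive the same event or offer.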

AI is also useful for personalization support and for comparing content performance. It can help teams understand which event types, update structures, or reward patterns are performing well across different cohorts and which are losing impact. Over time, this helps reduce guesswork and makes LiveOps cycles more intentional.

Finally, AI can support the detection of retention trends. It can highlight where player motivation is weakening, where event response is declining, or where progression and LiveOps are no longer working effectively together. That gives teams a better chance to act before a trend becomes a larger performance problem.
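One simple way to picture retention trend detection is a check for sustained decline in a weekly metric. The sketch below is a plain heuristic, not a machine-learning model. The function name `declining_streak`, the sample participation rates, and the 2-point drop threshold are all assumptions for illustration.

```python
from typing import List


def declining_streak(rates: List[float], min_drop: float = 0.02) -> int:
    """Count how many of the most recent consecutive periods fell by at least min_drop."""
    streak = 0
    # Walk backwards through consecutive (previous, current) pairs, newest first.
    for prev, curr in zip(reversed(rates[:-1]), reversed(rates[1:])):
        if prev - curr >= min_drop:
            streak += 1
        else:
            break
    return streak


# Hypothetical weekly event participation rates, oldest first.
weekly_participation = [0.41, 0.42, 0.38, 0.35, 0.31]

streak = declining_streak(weekly_participation)
needs_review = streak >= 3  # flag for a human to look at, not an automatic action
print(streak, needs_review)
```

The output of a check like this is a prompt for the team to investigate, which keeps the decision about what to change with the people who own the LiveOps strategy.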

For a game LiveOps service company, these are the kinds of operational gains that make LiveOps smarter, not just busier.

What AI Cannot Do on Its Own in LiveOps

AI can improve visibility, but it cannot replace product judgment.

It cannot fully understand what makes an event feel exciting rather than repetitive. It cannot define the game’s reward philosophy. It cannot decide how aggressive or generous a LiveOps cycle should feel from a player-trust perspective. It also cannot fully understand the brand tone, community mood, or whether a particular update may create short-term engagement while weakening the game’s long-term identity.

Those decisions still depend on human teams.

A good LiveOps strategy needs creative judgment, economic awareness, community sensitivity, and a clear understanding of the type of player experience the game wants to build over time. AI can support that process by surfacing stronger signals, but it should not be treated as the owner of the strategy.

In practical terms, AI can help teams see more. It cannot decide what kind of relationship the game should build with its players.

Why This Matters for Mobile, Online, and Multiplayer Games

LiveOps becomes increasingly important as a game becomes more connected, update-driven, and dependent on long-term engagement.

For a mobile game development company, even small improvements in event timing, retention strategy, or update sequencing can significantly affect player return rates. Mobile products often have limited room for weak timing or repetitive content structures.

For an online game development company, LiveOps is part of the ongoing product loop. Launch is only the starting point. The game continues to be evaluated through new content, timed systems, balance changes, and response to player behavior.

In multiplayer environments, the stakes rise even further. A multiplayer game development company has to think not just about individual user engagement, but also about competitive fairness, community participation, rank motivation, and the wider effect of live events on the player ecosystem. Poorly timed or poorly balanced updates can create much larger ripple effects than in a purely offline game.

This is why AI is becoming valuable in LiveOps. Not because it replaces strategy, but because it helps teams make faster, better-informed post-launch decisions in environments where timing, relevance, and retention matter constantly.

What AI Still Cannot Fix in Game Development

For all the value AI can bring to level design, QA automation, analytics, and LiveOps, it still does not solve the hardest problems in game development.

That is an important point, because this is where many AI discussions lose credibility. Once the conversation becomes too tool-focused, it starts sounding as if production quality is mainly a matter of workflow speed. It is not. Faster pipelines help, but they do not automatically create better games.

Some problems in game development are not efficiency problems. They are product, creative, and judgment problems.

AI Cannot Rescue a Weak Game Concept

If the core idea is not strong, AI will not save it.

A game that lacks a clear audience, a compelling core loop, or a meaningful reason for players to return will still struggle, even if the team uses smarter tools around testing, analytics, or content operations. AI may help the team move faster, but it cannot create market fit where none exists. It cannot supply product clarity if the game’s foundation remains unclear.

In other words, AI can improve execution, but it cannot replace the importance of building the right game in the first place.

AI Cannot Create a Strong Core Loop on Its Own

The core loop is the heartbeat of the game.

It defines what the player does repeatedly, why they enjoy doing it, and what makes that repetition feel rewarding rather than stale. If that loop is weak, repetitive in the wrong way, or disconnected from long-term motivation, the game will feel hollow no matter how much optimization is applied to it.

AI can help teams analyze player behavior around that loop. It can help surface drop-off, friction, or imbalance. But it cannot reliably decide what makes the loop genuinely satisfying. That still depends on design instinct, playtesting judgment, genre understanding, and a clear sense of player psychology.

A game does not become engaging because it is more automated. It becomes engaging because the central experience feels worth repeating.

AI Cannot Replace Taste, Direction, or Creative Intent

One of the biggest misunderstandings about AI in game development is treating efficiency and authorship as interchangeable.

They are not.

A game needs tone. It needs pacing. It needs a reason for its world, mechanics, progression, art direction, and reward systems to feel like they belong together. Those choices come from creative intent. They come from teams that know what experience they are trying to build and what kind of emotional response they want players to have.

AI can support iteration around that vision, but it cannot fully define the vision itself.

It cannot tell a team what should feel playful versus intense, minimal versus rich, forgiving versus demanding, or niche versus mass-market. Those are not just production decisions. They are creative decisions that remain deeply human.

AI Cannot Fix Poor Monetization Judgment

Monetization problems are rarely solved by more data alone.

A game can have strong dashboards and still make poor monetization choices if its offer design feels intrusive, its progression pressure feels manipulative, or its reward economy weakens trust. AI can help teams identify conversion patterns and surface behavioral signals, but it cannot decide what kind of monetization relationship the product should build with players.

That requires judgment.

A healthy monetization model needs to balance business goals with player experience, fairness, pacing, and long-term retention. If that balance is weak, AI may help the team identify symptoms faster, but it will not solve the underlying problem with the product philosophy.

AI Cannot Turn Shallow Progression Into Meaningful Retention

Progression is one of the easiest systems to expand, but one of the hardest to make meaningful.

A game can always add more levels, more rewards, more currencies, more missions, or more event layers. But if progression does not create a real sense of momentum, mastery, identity, or anticipation, players will eventually feel the emptiness underneath the volume.

AI can help teams measure where progression is underperforming. It can help surface where players are stalling or disengaging. But it cannot automatically transform shallow progression into something emotionally rewarding. That depends on how well the team understands motivation, pacing, challenge, and the design of long-term engagement.

More progression content is not the same as stronger progression design.

AI Cannot Replace Player Empathy

This may be the most important limit of all.

Great game teams do not only understand systems. They understand players. They understand when a level feels frustrating, when a reward feels generous, when a mechanic feels unclear, when an event feels exhausting, and when a small bug damages trust far more than expected. That kind of understanding is not just analytical. It is empathetic.

AI can help identify patterns around frustration or churn. But it does not experience confusion, delight, boredom, or relief the way a player does. It cannot fully replace the human ability to sense when something technically works but emotionally falls flat.

That is why strong game development still depends on teams that can think beyond data and into player experience.

The Best Studios Use AI to Support Judgment, Not Escape It

The most mature way to use AI in game development is not to treat it like a substitute for hard decisions.

It is to use it where it improves visibility, reduces wasted effort, and strengthens the team’s ability to make better calls. That means AI can absolutely improve the pipeline. It can help designers iterate faster, QA teams scale better, analytics become more useful, and LiveOps become more informed. But the game still rises or falls on the quality of human decisions around concept, loop design, progression, tone, fairness, and player trust.

That is the real balance smart studios are aiming for in 2026.

Not AI instead of judgment.

AI in support of better judgment.

How Smart Studios Are Using AI Without Breaking Their Pipeline, and Where StudioKrew Fits

The studios getting real value from AI in 2026 are usually not the ones trying to redesign their entire production model around it. They are the ones introducing it carefully, where the gain is measurable and the creative risk is low.

That approach matters because game pipelines are fragile in a very specific way. A tool that promises speed can easily create confusion if it adds too many review layers, weakens ownership, or floods teams with output that still needs heavy human filtering. AI only becomes useful when it fits the pipeline, respects the craft, and improves how teams already work rather than disrupting them for novelty’s sake.

Start With One Workflow, Not Everything at Once

One of the most common mistakes teams make is trying to apply AI across too many production functions at once.

In theory, that sounds ambitious. In practice, it usually creates noise. Teams end up testing multiple tools, changing review habits too quickly, and struggling to understand where the actual value is coming from. A much better approach is to start with a single workflow where the problem is already clear.

That could be repeated QA validation, early level pacing review, telemetry interpretation, or LiveOps segmentation. The point is not to “adopt AI” as a broad statement. The point is to solve a specific production bottleneck first. Once that value becomes visible, the team can expand with greater confidence.

The studios that scale AI well usually treat it like any other production improvement. They test it in a defined environment, learn where it helps, and only then widen its role.

Keep Human Approval in the Loop

AI can accelerate analysis, pattern recognition, and repetitive workflows. It should not remove human accountability from the pipeline.

Game quality still depends on designers who understand pacing, QA professionals who understand player-facing risk, producers who understand execution trade-offs, and product teams who understand what the game is trying to become over time. If AI is allowed to push output directly into the pipeline without thoughtful review, speed starts coming at the cost of trust.

That is why the smartest studios keep approval loops clear.

Humans still make the final calls on level structure, progression tuning, bug severity, LiveOps strategy, and product trade-offs. AI helps narrow the search space, highlight useful signals, and reduce repetitive effort, but ownership remains with the team. This is what keeps AI from becoming disruptive in the wrong way.

Measure Impact in Terms of Output, Not Novelty

A lot of AI adoption fails because teams measure enthusiasm instead of outcomes.

It is easy to say a tool is exciting. It is much more useful to ask whether it helped the team ship cleaner builds, reduce repeated QA effort, improve level iteration speed, detect churn patterns earlier, or make stronger LiveOps decisions. Those are the kinds of results that matter inside a real production environment.

If the tool creates more review work than it removes, the value is questionable. If it speeds up a task but weakens quality, the gain is superficial. If it helps the team move faster and make better decisions with less waste, then it has earned a place in the pipeline.
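The three tests in the paragraph above can be written down as a small check. This is only a sketch under stated assumptions: the function name `tool_verdict`, the inputs (hours per release cycle and escaped bug counts), and the verdict labels are hypothetical, and real teams would choose their own quality measures.

```python
def tool_verdict(
    hours_before: int,
    hours_after: int,
    review_hours_added: int,
    escaped_bugs_before: int,
    escaped_bugs_after: int,
) -> str:
    """Classify a tool trial by net time saved and by a simple quality proxy."""
    # Net saving = time removed from the task minus the extra review it created.
    net_hours_saved = (hours_before - hours_after) - review_hours_added
    if net_hours_saved <= 0:
        return "questionable"   # creates at least as much review work as it removes
    if escaped_bugs_after > escaped_bugs_before:
        return "superficial"    # faster, but quality got worse
    return "earned"             # faster without a measured quality loss


# Hypothetical per-cycle numbers for three trials of the same QA task.
print(tool_verdict(40, 25, 20, 6, 5))  # review overhead outweighs the saving
print(tool_verdict(40, 25, 5, 6, 9))   # time saved, more bugs escaped
print(tool_verdict(40, 25, 5, 6, 4))   # time saved, fewer bugs escaped
```

Escaped bugs are only one possible quality proxy. The point of the sketch is that the comparison uses before and after measurements rather than impressions of the tool.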

The strongest studios are not impressed by AI because it sounds advanced. They are interested in whether it improves execution.

Use AI Where the Signal Is Strong

AI works best in areas where patterns repeat, data is dense, and review cycles are already heavy.

That is why it tends to fit naturally in QA, analytics, LiveOps, and certain parts of level design. These are functions where the team is not looking for random inspiration. They are looking for stronger visibility, faster interpretation, and more efficient iteration. The signal is already there. AI simply helps surface it sooner.

By contrast, AI tends to create less dependable value in places where product identity, creative direction, or emotional design intent matter more than pattern detection. That does not mean it has no role there, but it does mean teams should be far more careful about the authority they grant it.

In other words, the best use of AI is usually not where the conversation is loudest. It is where the operational signal is strongest.

Where StudioKrew Fits in This Shift

StudioKrew’s most credible place in this shift is not as a company chasing AI hype. It is as a team that can apply AI to strengthen production without weakening the judgment behind the product.

That means treating AI as an operational advantage inside practical workflows, not as a replacement for game thinking. In the context of AI-integrated game development, that approach fits naturally with the way modern pipelines actually work. Teams still need strong design direction, strong QA discipline, strong LiveOps planning, and strong delivery execution. AI becomes useful when it helps those functions perform better together.

This is especially relevant for projects that sit close to mobile, online, and post-launch complexity. A game studio working across content-heavy or service-driven products needs efficient iteration, better production visibility, and faster response to player behavior after launch. The same is true in broader full-cycle game development, where success is not defined only by building the game, but by how well the pipeline supports launch, learning, and long-term improvement.

That is where StudioKrew fits in: not as “AI-first,” but as pipeline-aware, execution-focused, and practical about where AI creates real value.

That is also what makes this approach more believable.

The goal is not to force AI into every stage of game production. The goal is to use it to help teams ship with more clarity, test with more confidence, and optimize with better intelligence over time.

What This Means for Businesses Hiring a Game Development Partner

For businesses evaluating a game development partner in 2026, the AI question should not be, “Do they use AI?” The better question is, “Do they know where AI genuinely improves production, and where it should stay secondary to human judgment?”

That distinction matters because many companies can now mention AI in a pitch. Far fewer can explain how it actually improves execution across design, QA, analytics, and post-launch operations. For a buyer, that difference is important. A partner that understands pipeline maturity will usually be far more valuable than one that only presents AI as a trend marker.

Look Beyond AI Claims and Ask About Pipeline Maturity

A strong game partner should be able to explain how work moves from concept to launch and beyond.

That means being clear about how levels are iterated, how testing is structured, how data is interpreted after release, how LiveOps is handled, and how post-launch learning feeds back into the product. If AI is part of that process, it should appear as a support layer inside those workflows, not as a vague selling point disconnected from real delivery.

For buyers, this is often the biggest signal of quality. Studios that can clearly explain their pipeline are usually much better prepared to manage complexity than those that rely on generic innovation language.

Mobile and Online Products Benefit the Most From Structured AI-Assisted Workflows

The more dynamic the game, the more useful structured AI support becomes.

A mobile game development company often faces tighter retention windows, higher iteration pressure, greater device diversity, and stronger post-launch optimization needs than many teams expect. The same applies to an online game development company, where the product continues to evolve through updates, telemetry, events, and ongoing player feedback after launch.

In these environments, AI can support faster QA validation, clearer analytics interpretation, and better LiveOps timing. But for that to be meaningful, the studio has to know how to connect those insights back to product decisions. That is why workflow discipline matters more than AI language alone.

Ask How the Studio Handles QA, Analytics, and LiveOps, Not Just Build Delivery

Many buyers still evaluate a game partner primarily on visual quality, familiarity with the engine, and the ability to build the requested feature set. Those things matter, but they are not enough on their own.

A stronger evaluation process should also ask:

- How is QA structured across repeated builds and updates?

- How will analytics be used after launch?

- How does the studio handle LiveOps planning and post-launch iteration?

- How are design, testing, and player-behavior insights connected?

- Where does human review remain central in the process?

These questions reveal whether the studio is prepared only to produce a game or also to help that game perform.

That is especially important for projects that need long-term engagement, recurring updates, or retention-sensitive design.

Engine Expertise Still Matters, but It Should Connect Back to Outcomes

Technical engine capability remains a core factor in selecting the right partner. A team may be highly effective in one production environment and less suitable in another.

That is why buyers should still carefully evaluate engine strengths, whether they are looking for a Unity game development company for faster mobile and cross-platform execution or an Unreal Engine game development company for projects that demand a different production and visual strategy. But engine expertise should not be treated as an isolated checkbox. It should connect back to how the team handles iteration, testing, optimization, and long-term support.

A studio’s real value is not only in what engine it uses. It is in how well it turns engine capability into a stable and scalable product pipeline.

The Best Partners Think Beyond Launch

For many businesses, the biggest mistake in studio selection is thinking too narrowly about the initial release.

In reality, some of the most important decisions happen after the game is already live. Retention patterns, update priorities, content sequencing, economy adjustments, event timing, and quality control all shape whether the product actually grows. That is why businesses should look for partners who think beyond build completion and understand the broader lifecycle of game development services.

The strongest partners are not just building features. They are building systems that can be improved intelligently over time.

And that is ultimately what the AI conversation should help buyers evaluate. Not whether a studio sounds modern, but whether it can help deliver a game that is better tested, better understood, and better optimized once real players enter the experience.

Conclusion: The Quiet Advantage Will Compound

AI is not becoming valuable in game development because it sounds futuristic. It is becoming valuable because it helps solve practical production problems that modern studios face every day.

In 2026, the strongest use of AI is not in replacing creative teams or turning game development into automated theater. It is in helping teams iterate faster in level design, scale QA more intelligently, turn analytics into clearer product decisions, and run LiveOps with better timing and relevance. These are not flashy advantages, but they are meaningful ones, especially in mobile, online, and service-driven products, where small improvements can compound over time.

That is why the studios gaining the most from AI are often the least dramatic about it. They are not treating it as the pipeline’s identity. They are using it as a quiet layer of operational intelligence that helps the pipeline perform better.

For businesses exploring modern game development services, that is the more useful way to think about AI as well. Not as a promise that the process becomes effortless, but as a way to build stronger systems around design, testing, learning, and post-launch optimization. For teams working with a mobile game development company, that kind of practical advantage can make a real difference in retention, quality, and long-term product performance.

The quiet advantage will not come from who talks about AI the most. It will come from those who use it with the most discipline.